A/B testing creatives for ASO is the most reliable way to move from assumptions to evidence. Instead of guessing which icon, screenshot, or message will resonate, you can systematically validate what actually drives taps, installs, and user engagement.

But the impact goes beyond organic growth. The same insights that improve your app store performance can be leveraged across paid user acquisition channels, making A/B testing a central building block of any efficient mobile growth strategy.

In this guide, we break down why A/B testing app store creatives is essential for ASO, how to run experiments using Google Play Listing Experiments and App Store Product Page Optimization (PPO), and what testing frameworks & ASO best practices leading teams follow to scale experimentation. All of this is backed by real A/B testing case studies from Applica, which works with leading mobile brands. Let’s explore how to build a high-impact A/B testing approach for app store creatives in 2026.

What is A/B testing for ASO

A/B testing for ASO is the process of comparing two or more variations of app store creatives, such as icons, screenshots, or videos, to determine which version drives better performance, typically measured by conversion rate.

In a typical app store A/B test:

- Version A (control) represents your current store listing

- Version B (variant) introduces a specific change (e.g., new icon or different messaging on a screenshot)

- Traffic is split between versions

- Performance is measured based on user behavior (CTR, CVR, installs)

The goal is to isolate the impact of a single variable and identify which creative elements increase user engagement and installs.

Key metrics in A/B testing app store creatives

- Click-Through Rate (CTR): Percentage of users who tap on your app after seeing it in search or browse

- Conversion Rate (CVR / CR): Percentage of users who install after visiting your product page

- Install Volume: Absolute number of installs driven by each variant

- Retention (Day 1+): Ensures that improved conversion does not come at the expense of user quality.
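As a minimal sketch, the first three metrics above can be computed from raw funnel counts as follows. The `VariantStats` class, its field names, and all numbers are hypothetical and used only for illustration; the store consoles may define and report these metrics slightly differently.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw funnel counts for one variant (all values hypothetical)."""
    impressions: int         # times the listing was shown in search or browse
    product_page_views: int  # taps that opened the product page
    installs: int            # installs attributed to the variant


def ctr(s: VariantStats) -> float:
    """Click-through rate: product page views per impression."""
    return s.product_page_views / s.impressions if s.impressions else 0.0


def cvr(s: VariantStats) -> float:
    """Conversion rate: installs per product page view."""
    return s.installs / s.product_page_views if s.product_page_views else 0.0


# Hypothetical counts for a control and a variant.
control = VariantStats(impressions=100_000, product_page_views=8_000, installs=2_400)
variant = VariantStats(impressions=100_000, product_page_views=8_400, installs=2_646)

for name, s in (("control", control), ("variant", variant)):
    print(f"{name}: CTR={ctr(s):.2%}  CVR={cvr(s):.2%}  installs={s.installs}")
```

Keeping the raw counts next to the rates makes it easier to see whether a higher CVR also means more installs in absolute terms.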

Why A/B testing creatives for ASO matters

Unlike subjective design decisions based on gut feeling alone, A/B testing introduces statistical validation into creative optimization. Instead of guessing what works, growth teams can rely on measurable user behavior to guide their decisions.

This is especially critical because:

- First impressions on app stores are formed in milliseconds

- Small improvements in CVR compound at scale

- Incorrect creative decisions can negatively impact both organic and paid performance.

Why is app store A/B testing important for your organic growth?

App Store and Google Play algorithms increasingly rely on behavioral signals such as conversion rate, click-through rate (CTR), and retention. While exact ranking factors are not publicly disclosed, multiple industry studies consistently show a strong correlation between optimized app listings, improved conversion rates, and higher rankings. If you’re still in doubt, here are six reasons to A/B test your app store creatives.

1. A/B testing creatives for ASO can help you improve your App Store ranking

Conversion rate is widely considered a key indirect ranking signal. When more users install your app after viewing your listing, app stores interpret this as relevance and quality.

- Higher CVR → stronger engagement signals

- Stronger signals → improved rankings

- Better rankings → more organic installs

A/B testing app store creatives enables you to systematically optimize these signals.

2. A/B testing can help you increase the conversion rate

Creative optimization is one of the highest-impact levers for improving conversion rate.

A/B testing creatives can drive conversion uplifts of 3-20%, depending on app category and baseline performance.

App store A/B testing helps you:

- Validate messaging angles (feature-led vs. benefit-led)

- Compare design styles (minimal vs. multiple elements)

- Optimize visual hierarchy and storytelling

Even a small CVR uplift can translate into significant incremental installs at scale.
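To make the scale effect concrete, here is a rough back-of-the-envelope sketch. The function name and the input figures (500,000 monthly product page views, a 30% baseline CVR, a +5% relative uplift) are assumptions chosen for illustration, not benchmarks.

```python
def incremental_installs(monthly_page_views: int,
                         baseline_cvr: float,
                         relative_uplift: float) -> float:
    """Extra installs per month if CVR rises by `relative_uplift` (0.05 = +5%)."""
    return monthly_page_views * baseline_cvr * relative_uplift


# Hypothetical inputs: 500k page views, 30% baseline CVR, +5% relative uplift.
extra = incremental_installs(500_000, 0.30, 0.05)
print(f"{extra:,.0f} extra installs per month")
```

With these assumed inputs, a +5% relative uplift adds about 7,500 installs per month without any additional traffic.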

3. A/B testing app store creatives can help you improve your CTR

CTR is critical in search results and browse placements, where users make rapid decisions.

According to Storemaven, up to 60% of users decide whether to explore an app based on the first impression (icon + first screenshot).

Testing helps you:

- Improve tap-through behavior

- Increase qualified traffic

- Strengthen top-of-funnel performance.

4. A/B testing creatives for ASO can help you reduce your CPA

Improving your app store conversion rate has a direct impact on paid performance.

Higher conversion efficiency leads to lower acquisition costs across channels.

- Higher CVR → more installs per click

- More installs → lower CPI

- Lower CPI → improved ROAS.
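The chain above follows from simple arithmetic: if you pay per click and a fixed share of clicks converts, cost per install is cost per click divided by CVR. The sketch below assumes a constant cost per click and that every paid click lands on the product page; the figures are hypothetical.

```python
def cost_per_install(cost_per_click: float, cvr: float) -> float:
    """CPI = cost per click / product page conversion rate."""
    if cvr <= 0:
        raise ValueError("cvr must be positive")
    return cost_per_click / cvr


cpc = 0.60  # hypothetical cost per click in USD
before = cost_per_install(cpc, 0.30)  # baseline CVR of 30%
after = cost_per_install(cpc, 0.33)   # CVR after a +10% relative uplift
print(f"CPI before: ${before:.2f}, after: ${after:.2f} ({after / before - 1:+.1%})")
```

Under these assumptions, a +10% relative CVR uplift lowers CPI by roughly 9% at the same spend.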

5. A/B testing can help you improve your brand awareness

Your creative assets are not just essential elements of the app store product page or conversion tools. They shape your brand perception among users.

Testing helps you:

- Identify the most resonant visual identity

- Align messaging with user expectations

- Strengthen differentiation

Over time, users who see your app listing recognize the brand and are more inclined to download the app.

6. App store A/B testing helps you optimize custom product pages with data

A/B testing provides valuable, evidence-based insights that can be directly applied to your CPPs. Instead of relying on assumptions, you can identify which features, messages, and visual elements resonate most with users, and then tailor your custom product pages accordingly.

By understanding what drives conversion, you can:

- Highlight the most compelling features for different audiences

- Align creatives with specific user intents (e.g., search keywords or campaign themes)

- Position your core value proposition more effectively.

This is particularly important for custom product pages, where relevance is key. Insights from A/B testing allow you to create more targeted, high-converting CPPs that match user expectations and improve overall performance across both organic and paid channels.

What you can A/B test on app stores

While both the App Store and Google Play serve the same purpose as marketplaces for apps, they differ significantly in how their algorithms rank apps and in the types of listing elements you can test.

What you can test on the App Store

- App icon: The icon is a primary driver of CTR. Changes in color, contrast, and recognizability can significantly influence user engagement.

Expert tip: Sometimes even a small change makes a huge difference. With a strong hypothesis, you don’t need to rework your icon completely.

Below is an icon A/B test that Applica ran for Realized. The tested variant used a more voluminous rendering of the same icon visual.

The new icon variant showed an average conversion rate improvement of +8.6% (excluding the first 3 days).

- Screenshots (order, design, messaging): The first 2–3 screenshots are critical. You can test narrative structure, copy density, and visual hierarchy. Most users do not scroll beyond the initial frames (source: Storemaven).

- App preview videos: Videos can improve conversion for complex apps but may reduce performance if the value proposition is unclear. Testing video vs. no video is essential.

- Feature highlights: Different features resonate with different audiences. Testing helps identify what actually drives installs.

- Value propositions: Messaging variations (e.g., “Save time” vs. “Boost productivity”) can yield significantly different results.

- Localization variants: Adapting creatives to local markets can improve performance. According to Google, localization can increase conversion rates by up to 20%.

What you can test on Google Play

- App icon: A key CTR driver that benefits from rapid iteration via experiments.

- Screenshots: Enables testing of storytelling approaches and visual styles.

- Promo videos: Particularly effective in gaming and entertainment categories.

- Short description: Highly visible and critical for conversion; should be tested for clarity and keyword alignment.

- Long description: Contributes more to discoverability and keyword relevance than conversion.

- Feature graphic: A prominent visual unique to Google Play that strongly impacts first impressions.

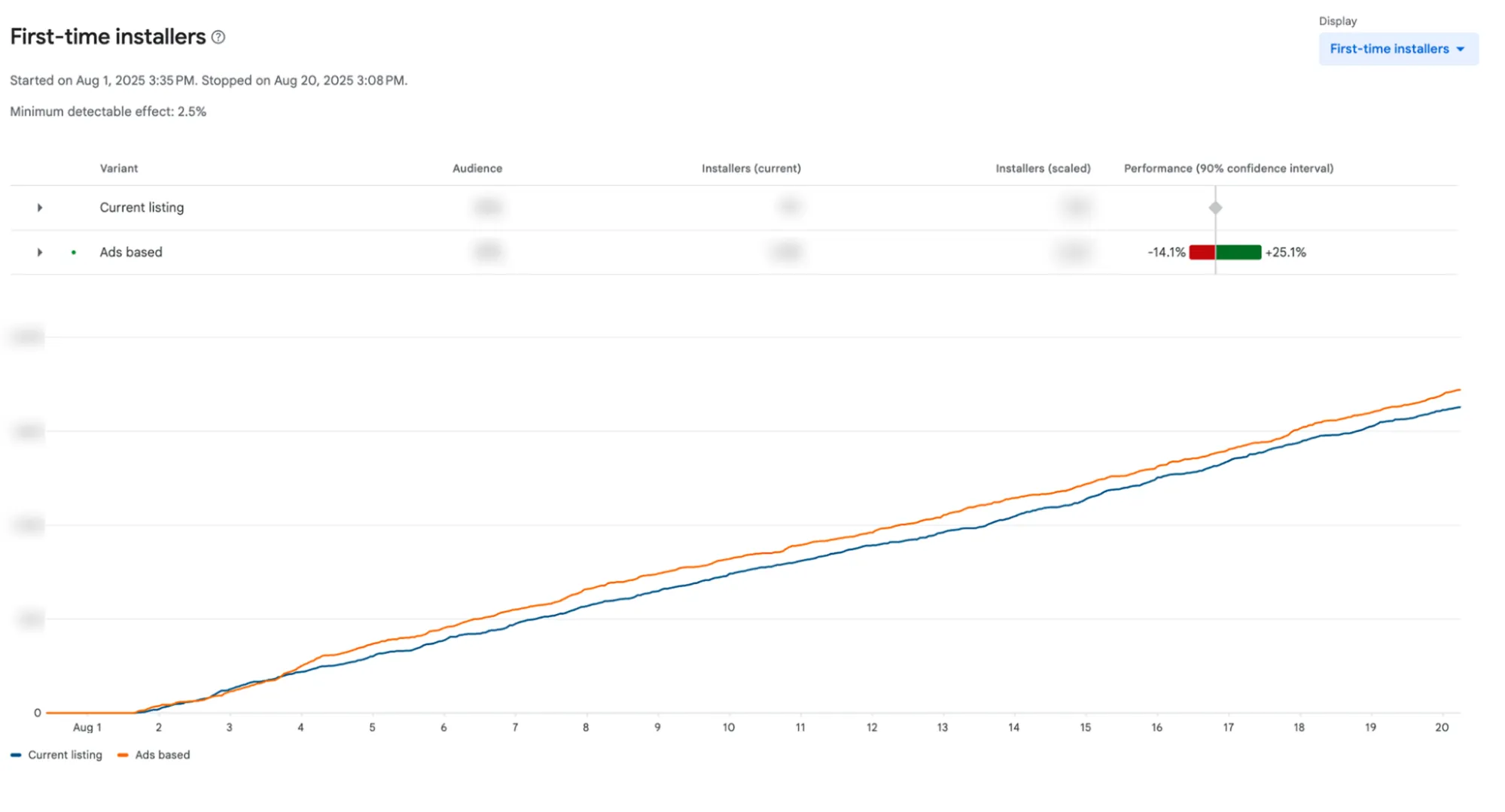

Example of a feature graphic creative test (Applica × Dogo)

Beyond its design, you can also test how the feature graphic is used within the overall store experience: as a supporting visual, a video placeholder, or a primary value communication asset.

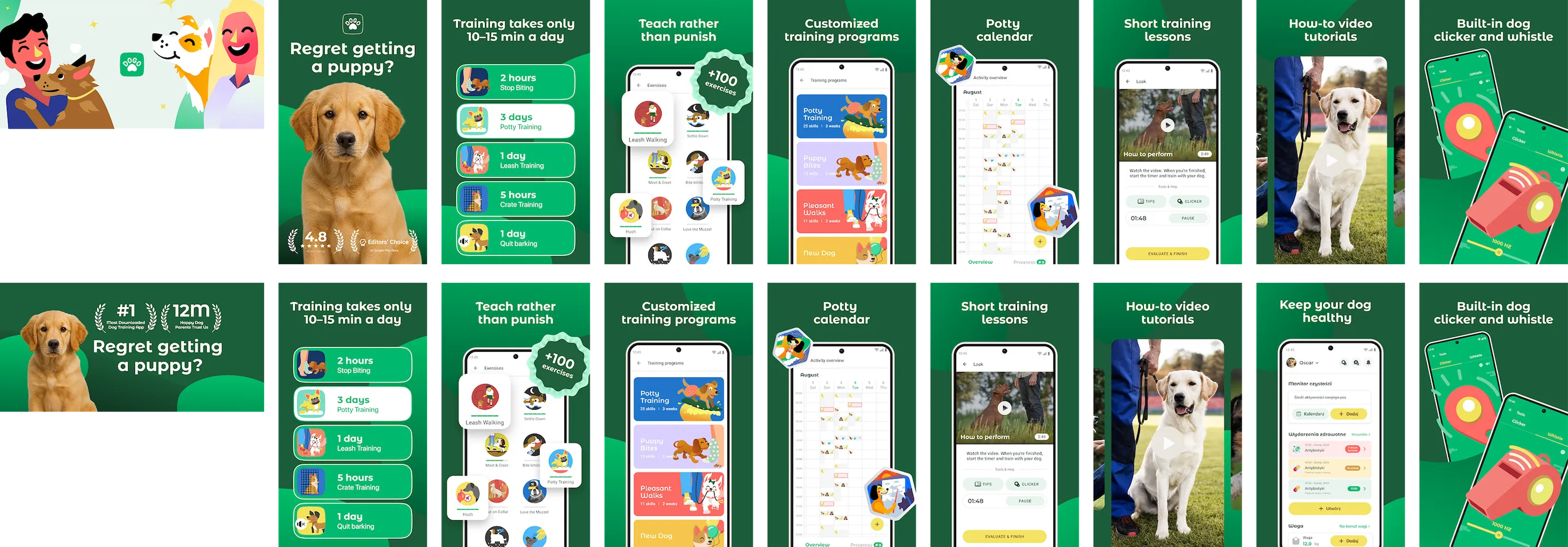

Applica’s team ran an A/B test for Dogo, a dog training app, focusing on the role and messaging of the feature graphic on Google Play (US market).

Context:

Since the page already included a video, the feature graphic effectively acted as a static fallback and entry point into the screenshot sequence. However, the initial setup created a flow where users were exposed to two consecutive promotional visuals (feature graphic + first screenshot), delaying clear communication of the app’s value.

Hypothesis:

Avoid using two promotional visuals back-to-back and instead start communicating what the user will get from the very first screen. The team applied a problem–solution approach, similar to what had already proven effective on iOS.

What changed in the variant:

- The feature graphic was redesigned to communicate the core value proposition immediately

- Messaging shifted to a problem–solution format

- The narrative started from the very first visual

Result: The test delivered a +11% conversion rate uplift and showed a stable positive trend, confirming the effectiveness of front-loading value.

Expert tip: Don’t waste your first visual on generic promotion. Start with clear, outcome-driven messaging from the very first touchpoint to improve user understanding and conversion.

Google Play Listing Experiments and App Store PPO

Both Apple and Google provide native A/B testing tools, but they differ significantly in flexibility, methodology, and strategic use.

Google Play Store Listing Experiments

Google Play offers one of the most advanced tools for ASO A/B testing.

What you can test with Google Play’s built-in A/B testing tool:

- App icon

- Screenshots

- Feature graphic

- Promo video

- Short description

- Long description

How it works:

Traffic is split across variants, and performance is measured based on install conversion rate.

Google provides:

- Conversion uplift

- Confidence intervals

- Probability of outperforming baseline
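To build intuition for what a "probability of outperforming baseline" figure means, the sketch below estimates it with a simple Beta-Binomial simulation. This is one common approach and an assumption on our part, not a description of how Google Play computes its number; the function name and the visitor and install counts are hypothetical.

```python
import random


def prob_variant_beats_control(control_installs: int, control_visitors: int,
                               variant_installs: int, variant_visitors: int,
                               draws: int = 20_000, seed: int = 0) -> float:
    """Estimate P(variant CVR > control CVR) using uniform Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        # Sample a plausible CVR for each arm from its Beta posterior.
        c = rng.betavariate(1 + control_installs,
                            1 + control_visitors - control_installs)
        v = rng.betavariate(1 + variant_installs,
                            1 + variant_visitors - variant_installs)
        wins += v > c
    return wins / draws


# Hypothetical counts: control 2,400 / 8,000 vs. variant 2,646 / 8,400.
p = prob_variant_beats_control(2_400, 8_000, 2_646, 8_400)
print(f"P(variant beats control) ~ {p:.1%}")
```

A value close to 50% means the data cannot tell the variants apart yet; values close to 100% indicate that the variant is very likely better than the control.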

Why it matters:

- Supports A/B/n testing (multiple variants)

- Enables localization testing

- Allows testing of both creatives and metadata

Tip: With Store Listing Experiments, Google Play is well suited to full-funnel ASO experimentation, not just creative testing.

App Store Product Page Optimization (PPO)

Apple’s PPO is more constrained but still essential for mobile growth on the App Store.

What you can test:

- App icon

- Screenshots

- App preview videos

How it works:

Apple splits traffic and reports:

- Conversion uplift

- Confidence intervals

Key limitations:

- No metadata testing

- Maximum of 3 treatments (variants) tested against the original

- Slower statistical significance for low-traffic apps

Strategic implication:

Many ASO teams pre-test creatives externally before validating them via PPO.

Tip: On Apple’s App Store, prioritize larger creative changes over micro-optimizations to reach significance.

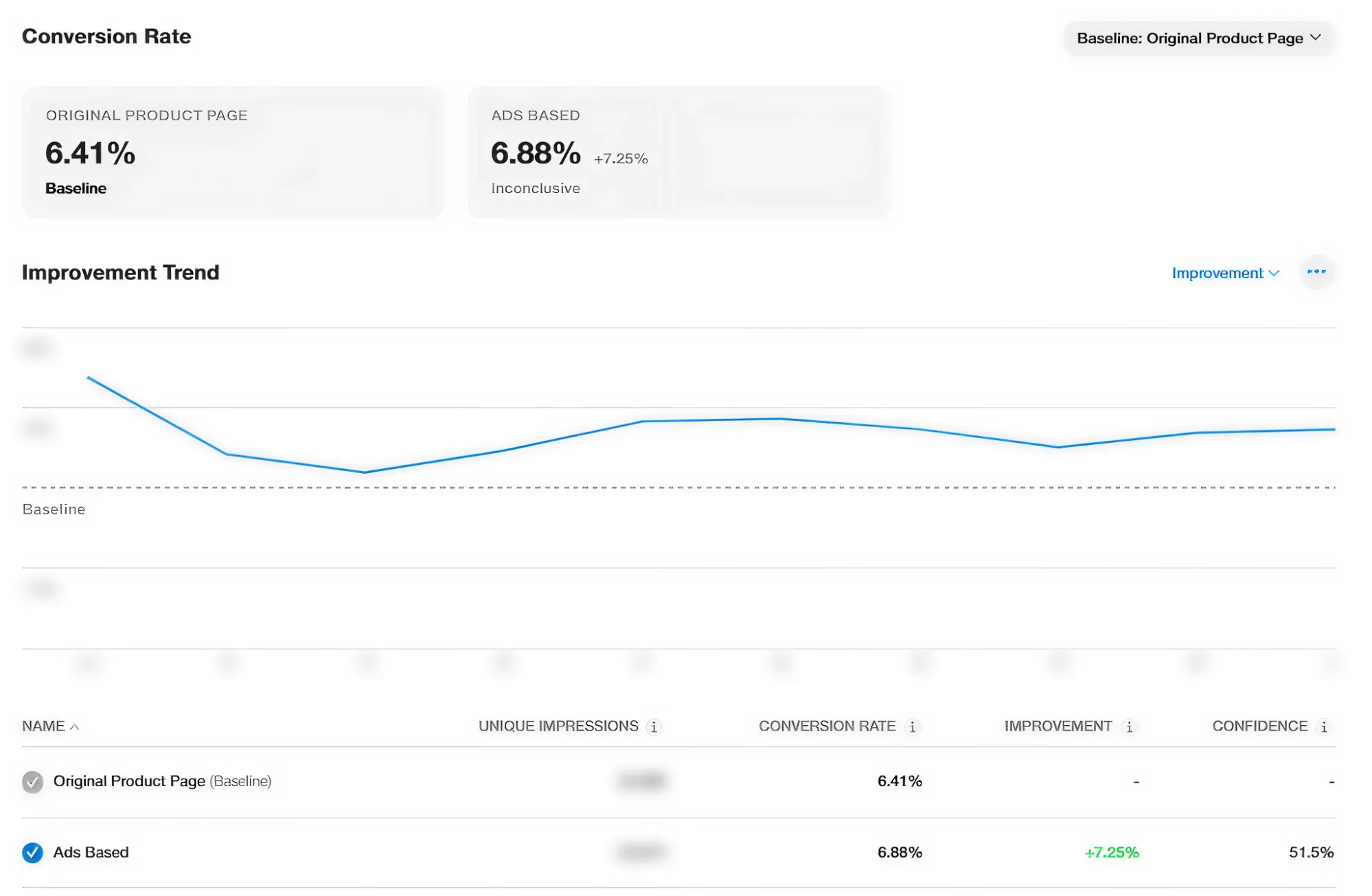

Real Example: Using Paid Creative Insights for App Store A/B Testing

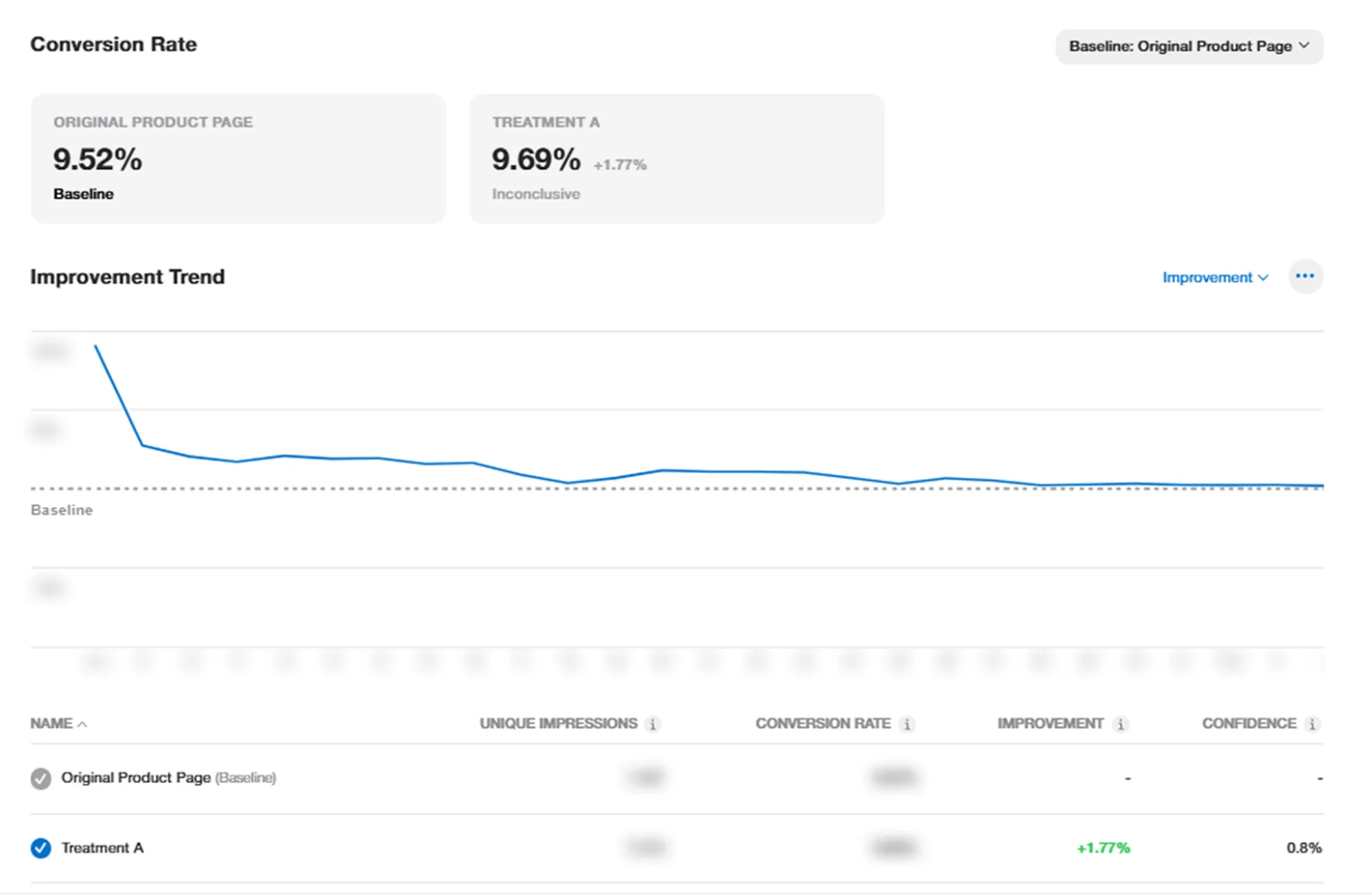

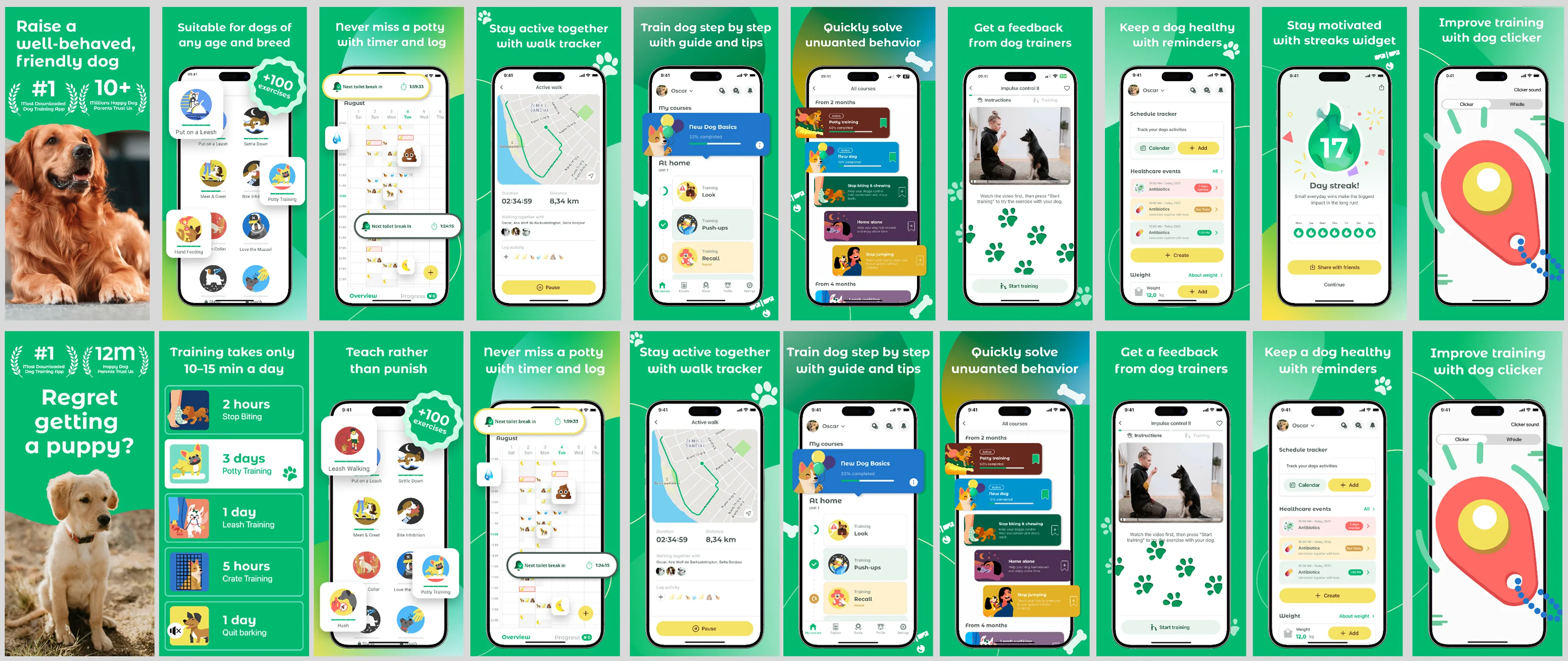

To illustrate how A/B testing insights can translate across channels, let’s look at a real Product Page Optimization (PPO) experiment conducted by Applica’s growth team for Dogo, a dog training app.

Experiment setup

- Platform: App Store (iOS)

- Tool: Product Page Optimization (PPO)

- Markets: English-speaking countries (US, UK, CA, AU)

- Traffic: All sources (organic + paid)

- Test iterations: 2 (first test stopped early due to insufficient confidence)

Hypothesis

The hypothesis, provided by the Dogo team, was based on top-performing paid creatives:

A more emotional, problem-led approach, combined with clear, outcome-driven solutions (e.g., “stop biting in 2 hours”) will outperform the existing feature-led creatives.

Creative strategy

Control (Previous Version):

- Feature-driven messaging

- Neutral, informative tone

- Focus on app functionality

Variant (Test Version):

- Emotion-first hook: “Regret getting a puppy?”

- Problem-solution framing

- Clear, time-bound outcomes (e.g., “2 hours”, “3 days”)

- Stronger visual hierarchy and storytelling

Results

The experiment delivered an average conversion rate uplift of +6.8% across tested markets.

This case suggests that:

- Paid channels are a fast testing ground for creative concepts

- App store A/B testing is a validation layer for long-term impact

- The combination of both creates a compounding growth loop.

How to Run App Store A/B Testing Experiments for ASO: Creative Testing Framework

A structured approach to A/B testing creatives for ASO is essential for reliable results. Here is the approach we follow at Applica, a full-cycle app growth agency.

Step 1: Prioritize: Choose Creative Elements to Test

Choose key creative elements to test, such as the app icon, first 2-3 screenshots, and the subtitle. These typically have the biggest impact on conversion, since they form the user’s first impression. While focusing on a single element can sometimes make sense (for example, isolating the icon to measure its impact precisely), in practice, testing several elements together often helps uncover user behavior trends and speeds up learning.

Step 2: Formulate a Hypothesis

Before running the test, define why you expect the change to impact your target metric, such as CTR or CVR. For example: “A more vibrant color palette will make the app icon stand out in search results, drawing attention away from competitors and increasing tap-through rate.”

Step 3: Test Setup

Use reliable third-party platforms or native store experiments to run controlled A/B tests. Native experiments measure real store traffic, while third-party platforms simulate store behavior; both can deliver actionable insights.

Step 4: Analyze Results

Don’t stop at surface-level numbers. Ensure your findings are backed by statistical significance and be wary of stopping tests too early: short-term spikes may not reflect long-term performance.
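If you want to sanity-check a result yourself, a two-proportion z-test is one standard way to ask whether a CVR difference could be due to chance. The sketch below is a generic textbook test under a normal approximation, not the method used by either store's console, and the counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(installs_a: int, visitors_a: int,
                          installs_b: int, visitors_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: both variants share the same CVR."""
    p_a = installs_a / visitors_a
    p_b = installs_b / visitors_b
    # Pooled CVR under the null hypothesis of no difference.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    se = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical counts: control 2,400 / 8,000 vs. variant 2,646 / 8,400.
z, p_value = two_proportion_z_test(2_400, 8_000, 2_646, 8_400)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A p-value below your chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be noise, but it says nothing about whether the test ran long enough to cover a full weekly cycle.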

Step 5: Implement

Roll out the winning creative to your live app store listing so it starts delivering real conversion gains.

Step 6: Iterate

Treat each winning variation as your new baseline and continue testing to compound improvements over time.
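Because each winner becomes the next baseline, uplifts multiply rather than add. A small sketch with hypothetical uplift values:

```python
from math import prod


def compounded_uplift(relative_uplifts: list[float]) -> float:
    """Total relative uplift when each win becomes the next test's baseline."""
    return prod(1 + u for u in relative_uplifts) - 1


# Hypothetical sequence of three winning tests: +5%, +4%, +6%.
wins = [0.05, 0.04, 0.06]
print(f"Sum of uplifts: {sum(wins):.1%}, compounded: {compounded_uplift(wins):.1%}")
```

In this illustration, three modest wins compound to slightly more than their simple sum, and the gap widens as more wins accumulate.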

Tip: A/B testing, like ASO, is a continuous process if you’re aiming for app store conversion rate optimization.

A/B Testing for ASO Beyond the App Stores

To accelerate experimentation cycles, many teams validate creatives outside the stores before deploying them.

Lookalike audiences on paid social (e.g., Meta)

- Test multiple creative angles

- Use lookalike audiences to simulate real users

- Measure CTR and conversion proxies

This approach enables faster iteration and reduces risk.

Other channels for ASO creative testing

1. TikTok Ads

Fast feedback loops and strong engagement signals make TikTok ideal for testing hooks.

2. Custom product pages & Apple Ads

Allows testing creatives aligned with specific search intent.

3. Programmatic & Display

Useful for validating broad appeal across audiences.

Best practices for A/B testing creatives for ASO

To get reliable, actionable insights from A/B testing, it’s not enough to run random experiments. Following structured ASO best practices ensures that each test drives meaningful improvements in conversions, user quality, and overall mobile app growth.

Start with a testable hypothesis, not a design preference

Every experiment should be driven by a clear assumption about user behavior. For example, instead of “this design looks better,” frame it as “highlighting social proof in the first screenshot will increase conversion rate.” This ensures your tests generate actionable insights. At the end of the day, they either prove your hypothesis right or wrong, and both are incredibly valuable.

Prioritize high-impact assets (icon & first screenshot)

The app icon and first screenshot drive the majority of user decisions, especially in search results. Even by optimizing just these elements, you can positively impact CTR and CVR.

Test one major variable at a time, but make it meaningful

While isolating variables is essential for clean data, the change itself should be substantial enough to influence behavior. Small tweaks (e.g., very minor color changes) often require large volumes to detect impact, whereas bold, visible changes produce clearer and faster learnings.
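The link between effect size and required traffic can be sketched with a standard sample size approximation for comparing two proportions. The 5% significance level, 80% power, and 30% baseline CVR below are conventional or hypothetical defaults, not values taken from the store tools.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline_cvr: float, relative_uplift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant for a two-sided two-proportion test."""
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_uplift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical 30% baseline CVR with three different expected uplifts.
for uplift in (0.02, 0.05, 0.10):
    n = visitors_per_variant(0.30, uplift)
    print(f"+{uplift:.0%} relative uplift: ~{n:,} visitors per variant")
```

The required sample grows roughly with the inverse square of the expected uplift, so halving the effect you want to detect roughly quadruples the traffic you need.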

Tip: While testing one variable at a time is a core principle, sometimes meaningful impact comes from rethinking the entire visual approach.

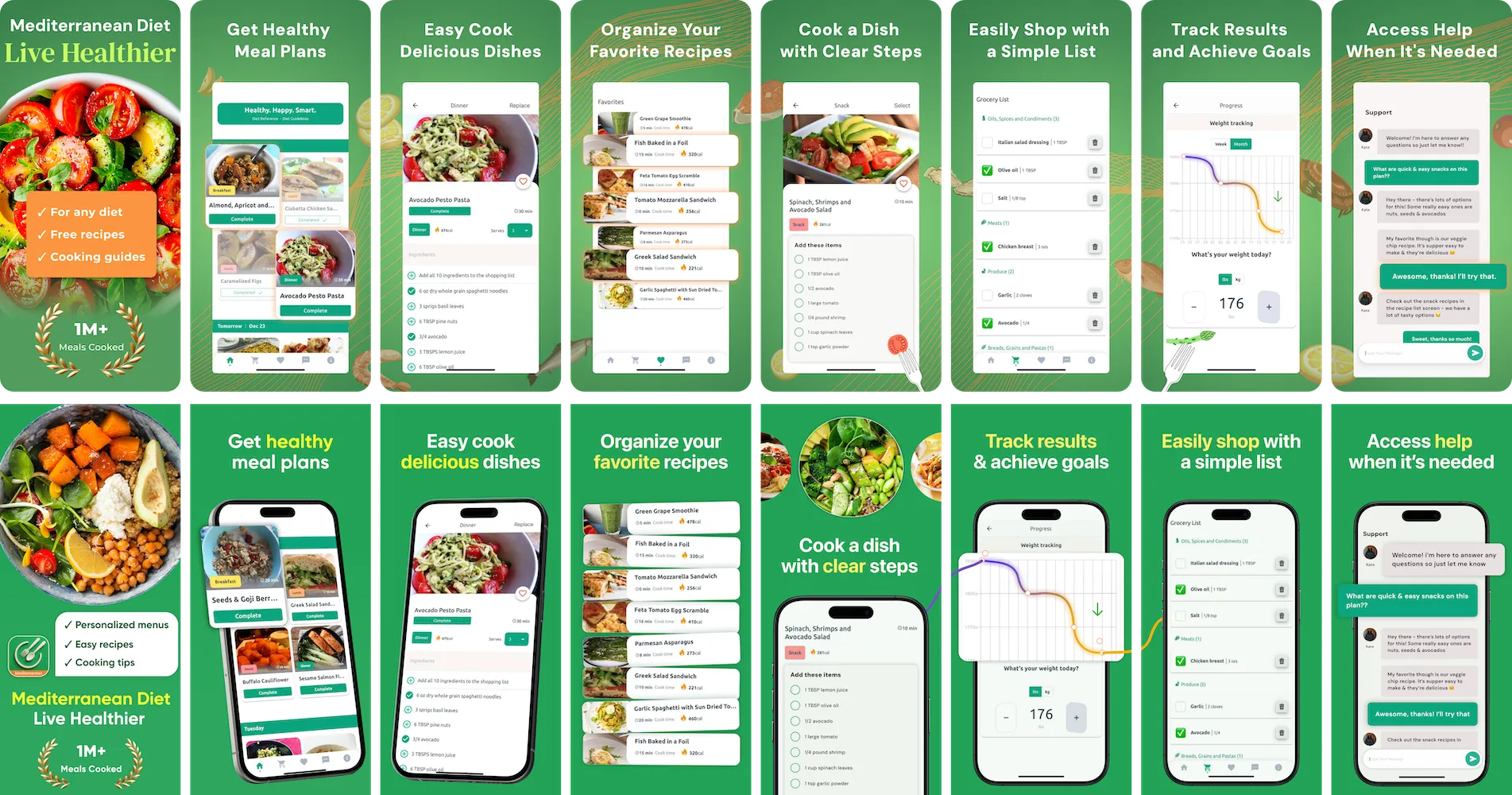

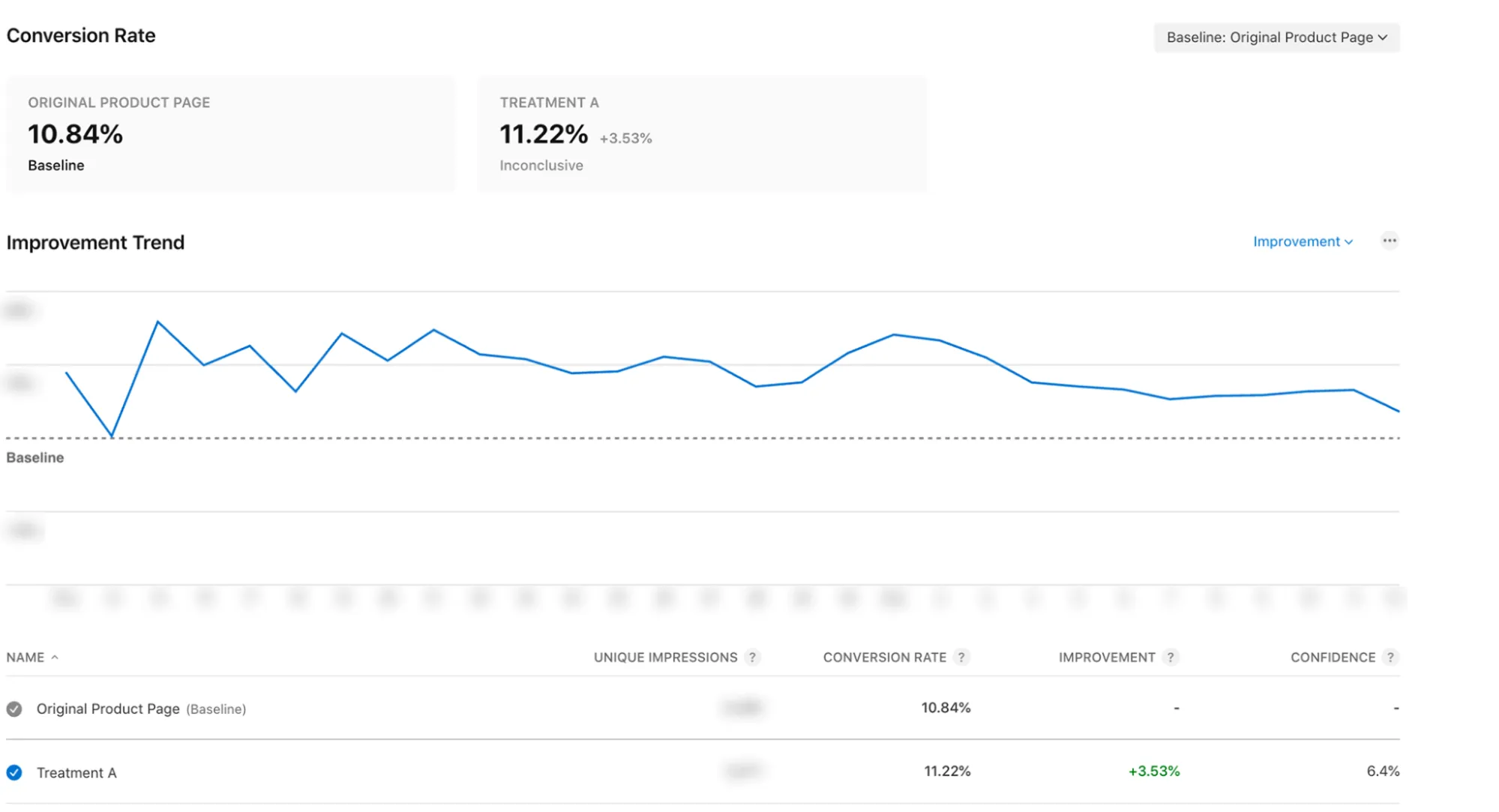

Real example: how Realized improved CVR by simplifying screenshot design

Applica’s growth team ran an A/B test for Realized, focusing on improving the readability and clarity of app store screenshots across all languages (with the majority of traffic coming from English-speaking markets, particularly the US).

Hypothesis

Simplifying the visual design by removing gradients, reducing background noise, and improving text readability will make screenshots easier to scan and increase conversion rates.

What changed

Before (Control):

- More complex backgrounds with gradients

- Higher visual density

- Lower text contrast and readability

After (Variant):

- Clean, simplified backgrounds (no gradients)

- Stronger contrast and clearer typography

- Better focus on key messages and UI elements

- Reduced visual noise

Results

The test delivered an average conversion rate uplift of +9.5% (excluding the first 3 days to avoid early volatility).

Key insight

Clarity beats complexity.

Users don’t analyze screenshots in depth: they scan them quickly. Improving readability helps communicate value faster, which directly impacts conversion.

Strategic takeaway

This case reinforces an important nuance in A/B testing:

Sometimes, the biggest gains come not from small tweaks, but from testing entire design approaches.

Testing directions like:

- Minimalistic vs. detailed design

- Clean vs. decorative backgrounds

- Different typography and layout styles

can unlock significantly stronger performance improvements than incremental changes.

Run experiments across full behavioral cycles

User behavior differs between weekdays and weekends. Running tests for full weekly cycles (7 or 14 days) ensures your data reflects real usage patterns and avoids skewed results from short-term fluctuations.

Rely on statistical significance, not directional trends

A variant should only be considered a winner if it demonstrates consistent uplift with strong statistical confidence. In practice, many teams also require a minimum uplift threshold (~5%) to filter out noise and avoid false positives.

Adapt your testing strategy to traffic volume

If your app has limited traffic, focus on simple A/B tests (two variants) to reach significance faster. High-traffic apps can afford more complex A/B/n or multivariate experiments.

Validate high-impact changes with confirmation tests

For critical assets like icons, it’s best practice to re-test winning variants (e.g., B vs. A again). This helps confirm that results are consistent and not influenced by seasonality, external factors, current trends, or statistical anomalies.

Balance speed and quality in creative production

Over-polishing early test variants slows down your experimentation velocity. Instead, aim for “good enough to test” and iterate based on performance data. Faster cycles lead to faster learning and better long-term outcomes.

Align ASO experiments with paid UA performance

Changes in creatives affect not only organic conversion but also paid campaign efficiency. Monitor metrics like CPI, CTR, and ROAS before, during, and after tests to capture the full impact across growth channels.

Measure downstream quality, not just installs

An increase in conversion rate is only valuable if it brings the right users. Track post-install metrics like Day 1 retention and early engagement to ensure your creatives set accurate expectations and attract high-quality users.
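A simple guardrail is to compare variants on retained users per visitor rather than on installs alone. In the hypothetical sketch below, the variant wins on CVR but loses once Day 1 retention is factored in; the function name and all figures are made up for illustration.

```python
def retained_per_1000_visitors(cvr: float, day1_retention: float) -> float:
    """Users still active on Day 1 per 1,000 product page visitors."""
    return 1000 * cvr * day1_retention


# Hypothetical: the variant converts better but retains worse.
control = retained_per_1000_visitors(cvr=0.30, day1_retention=0.40)
variant = retained_per_1000_visitors(cvr=0.33, day1_retention=0.35)
print(f"control: {control:.1f}, variant: {variant:.1f}")
```

If a pattern like this shows up in your own data, the creative may be setting expectations that the app does not meet, even though the store experiment reports a win.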

Build a continuous experimentation engine

The most successful apps treat A/B testing as an ongoing process, not a one-time effort. Maintaining a structured testing roadmap and backlog of hypotheses allows you to compound gains over time and stay ahead of competitors.

Conclusion

A/B testing creatives for ASO is not just a tactic; it’s a fundamental part of how modern mobile teams grow their apps.

Instead of relying on assumptions or subjective design decisions, A/B testing allows you to understand what actually resonates with users. It helps you improve conversion rates, strengthen your positioning, and ultimately drive more efficient growth across both organic and paid channels.

At the same time, there’s no single “winning formula.” What works for one app, audience, or market may not work for another. That’s why continuous experimentation is key. The most successful teams don’t run one test; they build a system where testing, learning, and iterating never stop.

As shown in the examples from Applica, even relatively simple changes, whether it’s refining messaging, improving readability, or rethinking how assets are used, can lead to meaningful uplifts in performance.

The key is to stay structured, move fast, and let data guide your decisions.

Need help with A/B testing: from generating hypotheses to designing, running, and analyzing experiments? The Applica team is here to help. Let’s talk!

FAQ

What is A/B testing for app store creatives?

A/B testing for app store creatives is the process of comparing different versions of visual assets, such as icons, screenshots, or videos, to determine which version performs better in driving user engagement and installs.

What can you A/B test in app stores?

You can A/B test a variety of elements, including app icons, screenshots, videos, feature graphics (on Google Play), and even text elements like descriptions. The goal is to identify which combinations of visuals and messaging maximize conversion rates.

What is the difference between A/B testing in ASO and general A/B testing?

A/B testing in ASO specifically focuses on optimizing app store listings to improve visibility and conversion. Unlike traditional A/B testing (e.g., on websites), ASO experiments are limited by platform constraints and rely heavily on creative assets and store-specific tools like Product Page Optimization (PPO) and Google Play Listing Experiments.

Why is A/B testing even more important in 2026?

As competition in app stores continues to grow, conversion rate has become a key differentiator. With more apps optimizing their listings, even small improvements can have a significant impact. In 2026, continuous experimentation is essential to stay competitive and adapt to evolving user expectations and store algorithms.

How long should an ASO A/B test run?

Tests should run for at least 7 days, ideally in full weekly cycles (e.g., 7 or 14 days), to capture variations in user behavior and achieve statistical significance.

How often should I run A/B tests?

A/B testing should be an ongoing process. High-performing teams run continuous experiments, maintaining a steady pipeline of hypotheses and iterating based on previous results.

Does A/B testing affect my app’s ranking?

Indirectly, yes. By improving conversion rate and engagement signals, A/B testing can positively influence your app’s ranking over time, as app store algorithms tend to favor listings that are optimized and convert well.

What is the most important asset to test first?

The app icon and the first screenshot are the most impactful elements, as they drive the majority of first impressions and influence the user’s decision whether to download.

What kind of results should I expect?

Results vary depending on your starting point, but according to some industry benchmarks, creative A/B testing can deliver conversion uplifts of up to 20-30%. However, even smaller, consistent improvements can compound into significant growth over time.

Can you A/B test on both app stores?

Yes. Google Play offers Store Listing Experiments, while the App Store provides Product Page Optimization (PPO), each with different capabilities and limitations.

Should you test outside app stores?

Yes. Testing creatives on external channels such as paid social allows for faster iteration and helps validate ideas before deploying them in app stores.

Can I apply A/B testing results to paid user acquisition (UA)?

Yes. Insights from A/B testing app store creatives can, and should, be applied to your paid user acquisition campaigns.

High-performing creatives in the app store often translate well into paid channels because they reflect what resonates most with your target audience. Messaging, visual hierarchy, feature positioning, and value propositions that drive higher conversion rates on your product page can be reused or adapted for ads.

However, results may vary depending on the platform and audience intent. Users on paid channels (e.g., Meta or TikTok) are typically less intent-driven than app store visitors, so creatives may require slight adjustments in hook, pacing, or format.

The most effective approach is to:

- Use ASO A/B testing to identify winning concepts

- Adapt those concepts for paid formats (video, static ads, UGC)

- Validate and iterate further within each paid channel

This creates a feedback loop between organic and paid growth, where learnings continuously reinforce each other.